Implementing AWS S3 Lifecycle Policy

This article gives a detailed overview of implementing AWS S3 lifecycle policies and how they can help minimize the risk of data loss. By the end, you’ll have a better understanding of how to retain critical data and keep it secure using S3 lifecycle policies.

Minimizing Data Loss: Implementation of Lifecycle Policies

Why go with Lifecycle Policies?

- Lifecycle policies in S3 are one of the best ways to make sure that all data is maintained and managed within a safe environment.

- Data is cleaned up and deleted as soon as it is no longer needed, so you do not pay for storage you no longer use.

- Through lifecycle policies, you can automatically act on objects inside the S3 buckets you own, either migrating them to Glacier or deleting them permanently from the bucket.

Lifecycle policies are typically used for the following reasons:

- Security

- Legislative compliance

- Internal policy compliance

- General housekeeping

With well-designed lifecycle policies in place, you gain the following advantages:

- Improved data security.

- Sensitive information is not retained for longer than necessary.

- Data can easily be archived into the Glacier storage class, which offers a few extra security features when required.

Glacier: This storage class is mainly used as a cold storage solution for data that is retrieved only once in a while. It is commonly chosen because it is cheaper than standard S3 storage.

- Lifecycle policies are implemented at the bucket level, and a bucket can have up to 1,000 lifecycle rules.

- Different policies can be set up within the same bucket, affecting different objects through the use of object ‘prefixes’.

- The policies are checked and run automatically; no manual start is required.

- Be aware that lifecycle policies may not be executed immediately after initial setup, as the policy needs to propagate across the AWS S3 service. Keep this in mind when you first verify that your automation is live.
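As a rough illustration of the points above, a lifecycle configuration is simply a list of rules, each scoped by a prefix. The sketch below builds two rules for one bucket as plain Python dictionaries, in the shape that the boto3 call `put_bucket_lifecycle_configuration` accepts. The prefixes `logs/` and `reports/`, the rule IDs, and the day counts are made-up values chosen for illustration only.

```python
import json

MAX_RULES_PER_BUCKET = 1000  # S3 limit on rules in one lifecycle configuration


def build_configuration(rules):
    """Wrap a list of rule dicts into a lifecycle configuration."""
    if len(rules) > MAX_RULES_PER_BUCKET:
        raise ValueError("a bucket accepts at most 1000 lifecycle rules")
    ids = [rule["ID"] for rule in rules]
    if len(ids) != len(set(ids)):
        raise ValueError("rule IDs must be unique within a bucket")
    return {"Rules": rules}


# Hypothetical rules: different prefixes, different actions, same bucket.
rules = [
    {
        "ID": "expire-logs",
        "Filter": {"Prefix": "logs/"},
        "Status": "Enabled",
        "Expiration": {"Days": 14},
    },
    {
        "ID": "archive-reports",
        "Filter": {"Prefix": "reports/"},
        "Status": "Enabled",
        "Transitions": [{"Days": 90, "StorageClass": "GLACIER"}],
    },
]

configuration = build_configuration(rules)
print(json.dumps(configuration, indent=2))
```

Keeping the rules as data makes it easy to review them before anything is sent to AWS.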

These policies can be implemented through either the AWS Console or the S3 API.
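For the API route, a minimal sketch might look like the following. It assumes a client object that behaves like `boto3.client("s3")`; the function name `apply_and_verify` and the bucket name in the usage comment are hypothetical. The client is passed in as a parameter so the function can be tried with a stand-in object before pointing it at a real account.

```python
def apply_and_verify(s3_client, bucket, configuration):
    """Upload a lifecycle configuration, then read it back.

    `s3_client` is expected to behave like boto3.client("s3").
    Returns the rule IDs that S3 reports for the bucket.
    """
    s3_client.put_bucket_lifecycle_configuration(
        Bucket=bucket,
        LifecycleConfiguration=configuration,
    )
    # Reading the configuration back confirms it was stored; it does not
    # prove that the rules have already been evaluated against objects.
    stored = s3_client.get_bucket_lifecycle_configuration(Bucket=bucket)
    return [rule["ID"] for rule in stored["Rules"]]


# Usage sketch (needs boto3 and AWS credentials; bucket name is made up):
#   import boto3
#   rule = {"ID": "example", "Filter": {"Prefix": ""},
#           "Status": "Enabled", "Expiration": {"Days": 14}}
#   print(apply_and_verify(boto3.client("s3"), "example-bucket", {"Rules": [rule]}))
```

Note that this call replaces the bucket's entire lifecycle configuration, so any existing rules you want to keep need to be included in the configuration you send.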

How to set a Lifecycle Policy via the AWS Console?

Setting up a lifecycle policy within S3 takes only a few steps:

- Sign in to the Console and choose ‘S3’.

- Go to the bucket for which you would like to implement the lifecycle policy.

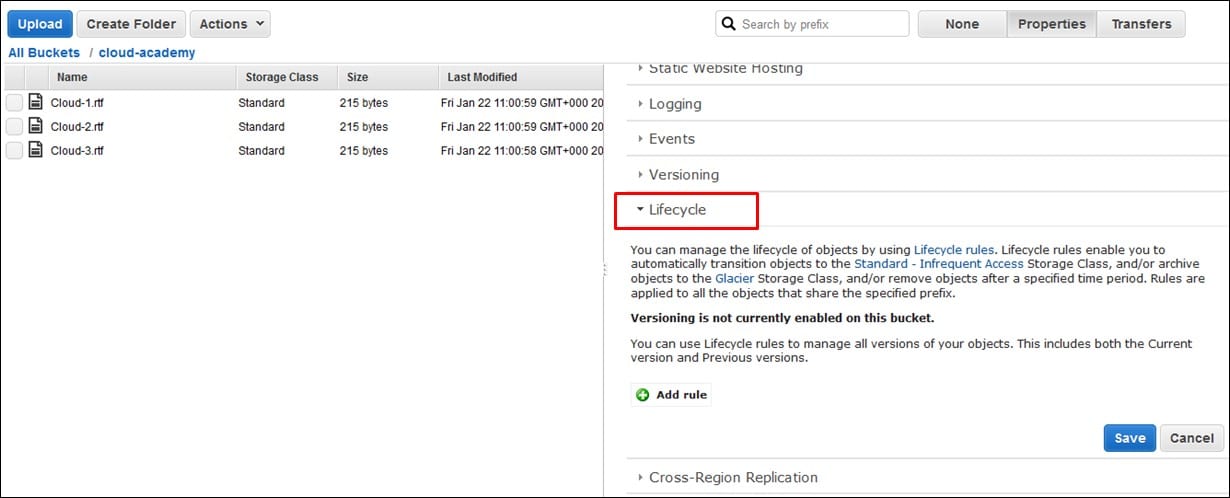

- Click on ‘Properties’ and then ‘Lifecycle’.

- Start adding the rules you want for your policy. As seen in the picture above, no rules have been set up yet.

- Select Add rule.

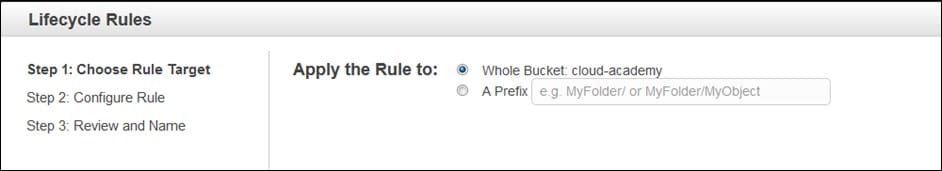

- You can now set up a policy for the whole bucket or only for objects with a given prefix. By choosing prefixes, several different policies can be set up inside the same bucket.

- A prefix can point to a subfolder inside the bucket or to a single object, which gives you a more fine-grained set of policies.

- For our example here, let’s choose Whole Bucket, then click on Configure Rule.
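To make the difference between a whole-bucket rule and a prefixed rule concrete, the sketch below treats a prefix filter as a plain starts-with match on the object key, with an empty prefix standing for the whole bucket. The object keys and the helper name `rule_applies` are invented for illustration.

```python
def rule_applies(prefix, key):
    """A prefix filter is a plain starts-with match on the object key."""
    return key.startswith(prefix)


# Hypothetical object keys in one bucket.
keys = [
    "logs/2024/app.log",
    "reports/q1.pdf",
    "logs-archive/old.log",
    "readme.txt",
]

# An empty prefix matches every key, which is the "Whole Bucket" choice.
whole_bucket = [k for k in keys if rule_applies("", k)]

# "logs/" matches only keys under that folder; "logs-archive/" is excluded.
logs_only = [k for k in keys if rule_applies("logs/", k)]

print(whole_bucket)
print(logs_only)
```

Including the trailing slash in the prefix is a deliberate choice here: without it, `logs` would also match `logs-archive/old.log`.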

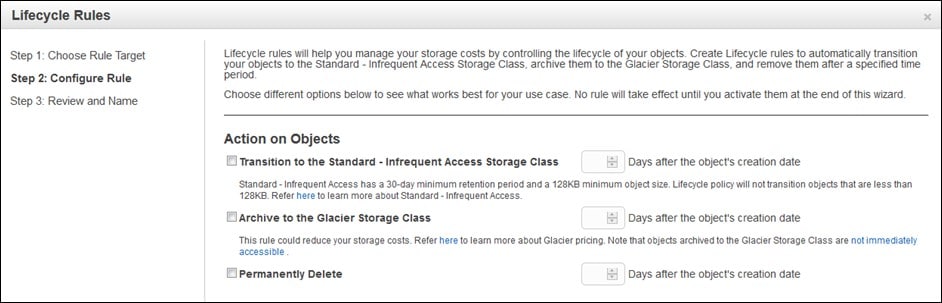

Here we get three options to choose from:

- Moving your object to Standard – Infrequent Access

- Archiving your object to Glacier

- Permanently deleting your object

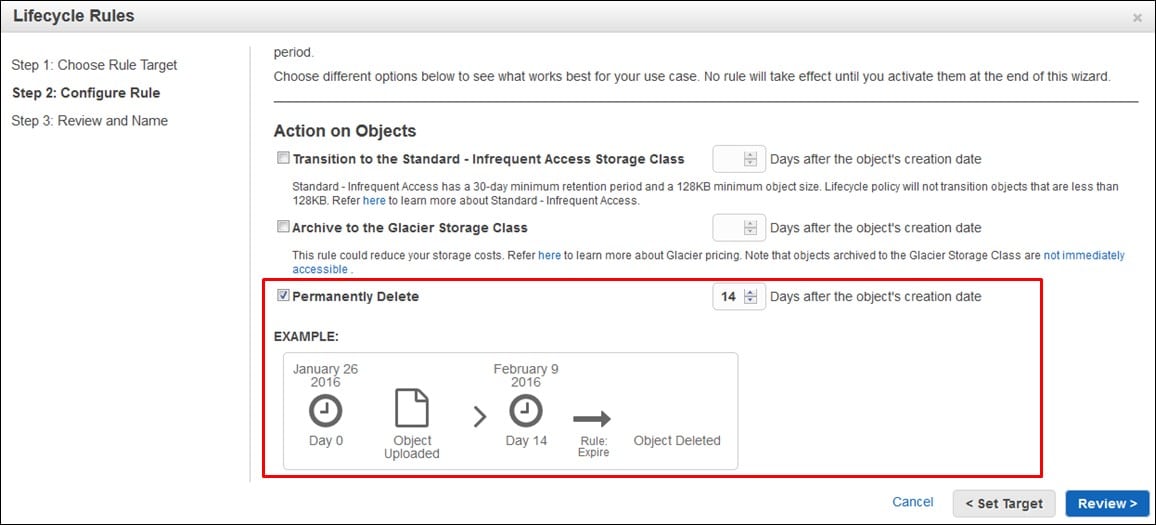
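In API terms, the three console options correspond to transition and expiration actions on a rule, and they can also be combined in a single rule. The following is a minimal sketch under assumed values: the helper name `tiered_rule`, the rule ID, and the day counts (30, 90, 365) are illustrative and not recommendations.

```python
def tiered_rule(rule_id, prefix, ia_days, glacier_days, expire_days):
    """Build one rule that moves, archives, then deletes objects."""
    # The stages only make sense in this order. S3 also enforces its own
    # minimums, for example 30 days before a move to Standard-IA.
    if not (ia_days < glacier_days < expire_days):
        raise ValueError("day counts must increase from IA to Glacier to deletion")
    return {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",
        "Transitions": [
            {"Days": ia_days, "StorageClass": "STANDARD_IA"},  # infrequent access
            {"Days": glacier_days, "StorageClass": "GLACIER"},  # archive
        ],
        "Expiration": {"Days": expire_days},  # permanent deletion
    }


rule = tiered_rule("tiered-example", "", ia_days=30, glacier_days=90, expire_days=365)
print(rule)
```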

For example, let’s choose Permanently Delete. For security reasons, you might need data to be removed two weeks after its creation date, so enter the number of days (14 in this case) and click on Review.
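The same two-week deletion rule can be written down as data. This is a sketch only: the helper `expire_after` and the rule name are hypothetical, and the dictionary follows the rule shape used by the S3 lifecycle API.

```python
import json


def expire_after(days, rule_id, prefix=""):
    """Build a rule that permanently deletes objects `days` after creation.

    An empty prefix means the rule covers the whole bucket.
    """
    if not isinstance(days, int) or days < 1:
        raise ValueError("days must be a positive whole number")
    return {
        "ID": rule_id,
        "Filter": {"Prefix": prefix},
        "Status": "Enabled",  # the rule is active as soon as it is stored
        "Expiration": {"Days": days},
    }


# Two weeks, whole bucket; the rule name is a hypothetical choice.
two_week_rule = expire_after(14, "delete-after-two-weeks")
print(json.dumps({"Rules": [two_week_rule]}, indent=2))
```

This sketch assumes an unversioned bucket. On a bucket with versioning enabled, expiration adds a delete marker instead of removing the data outright.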

- Choose a name for your rule, then review everything. If all is well, select Create and Activate Rule.

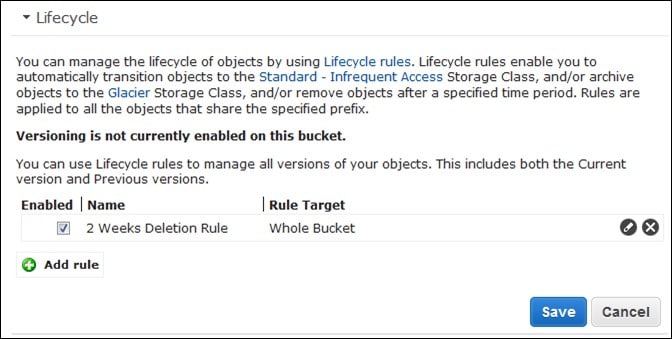

- Under the Lifecycle section, you will see the rule you have just created.

- The policy now applies to all objects within that bucket, and S3 enforces its rules on them.

- Any objects in your bucket that are already older than the number of days you’ve chosen become eligible for deletion as soon as the policy has propagated; the actual removal happens asynchronously and may take a little longer.

- New objects created in the bucket from now on will be deleted automatically once the selected number of days has passed.
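The timing described above can be sketched with a small date calculation. S3 adds the configured number of days to an object's creation time and rounds the result up to the next midnight UTC; the function names below are hypothetical, and treating an object created exactly at midnight the same way is an assumption of this sketch. Actual removal may still lag behind the computed time.

```python
from datetime import datetime, timedelta, timezone


def expiration_eligibility(created, days):
    """Return when an object becomes eligible for expiration.

    `created` must be a timezone-aware datetime. The rule's day count is
    added, then the result is rounded up to the next midnight UTC.
    """
    due = created.astimezone(timezone.utc) + timedelta(days=days)
    start_of_day = due.replace(hour=0, minute=0, second=0, microsecond=0)
    return start_of_day + timedelta(days=1)


def is_eligible(created, days, now):
    """True if the object would already be eligible at time `now`."""
    return now >= expiration_eligibility(created, days)


created = datetime(2014, 1, 15, 10, 30, tzinfo=timezone.utc)
print(expiration_eligibility(created, 14))  # 2014-01-30 00:00:00+00:00

# An object already older than the rule's day count is eligible right away.
now = datetime(2014, 3, 1, tzinfo=timezone.utc)
print(is_eligible(created, 14, now))  # True
```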

This way, you can make sure that no sensitive or confidential data is stored unnecessarily. It also reduces costs by automatically removing unneeded data from your S3 bucket, which makes it a win-win situation.

- If you had instead chosen to archive your data into Glacier, you would benefit from cheaper storage than standard S3, quoted here at $0.01 per GB.

- You could also keep tight security by using IAM user policies and Glacier vault access policies, which accept or reject access requests coming from various users.

- Glacier also makes WORM (Write Once Read Many) compliance available through a Vault Lock policy, which essentially freezes your data and denies any future changes. Note that vault access policies and Vault Lock apply to Glacier vaults; objects archived by an S3 lifecycle rule remain accessible only through S3.

Lifecycle policies help you manage and automate the life of objects stored in S3 while ensuring compliance. They let you select cheaper storage options and, at the same time, adopt additional security controls from the Glacier class.
